Unstructured Data Processing: How LLMs Unlock the Missing 90% of Business Context

August 28, 2025 - 15 min read

This article includes insights from Marko Pohl, an AI transformation expert with over 15 years of experience building and scaling digital solutions and data infrastructures across Europe, MENA and Southeast Asia.

For decades, companies gathered and structured as much data as possible to make better decisions, improve products, and provide better customer experiences. Organisations became quite adept at handling structured data, funneling it to dashboards, and enriching processes with it.

However, most of the data remained untouched. It was fairly easy to work with data that comes in rows and columns and adheres to a specific format and naming conventions. But what if your data is a batch export of 10,000 emails or a folder with scanned receipts for the entire year?

Working with structured data is like building a snowman, while unstructured data is like shoveling snow from your driveway in a snowstorm. A few years ago, no one wanted to deal with unstructured data as there were no widely available tools for organizing and processing it.

But, everything changed with the emergence of AI. Today’s LLMs have two features that make unstructured data essential:

- LLMs thrive on context: the more data you feed them, the better their output.

- They are highly effective at interpreting large volumes of unstructured data.

In this article, we’ll explore what unstructured data is, how it differs from structured data, how organisations manage it today, and the role AI plays in unlocking its value.

What is Unstructured Data and Why It Became so Important Today?

“Unstructured data is everything within a company that does not sit in a structured database. It represents the business’s everyday knowledge, activities, and interactions that are not captured in a predefined format.”

— explains the concept Marko Pohl.

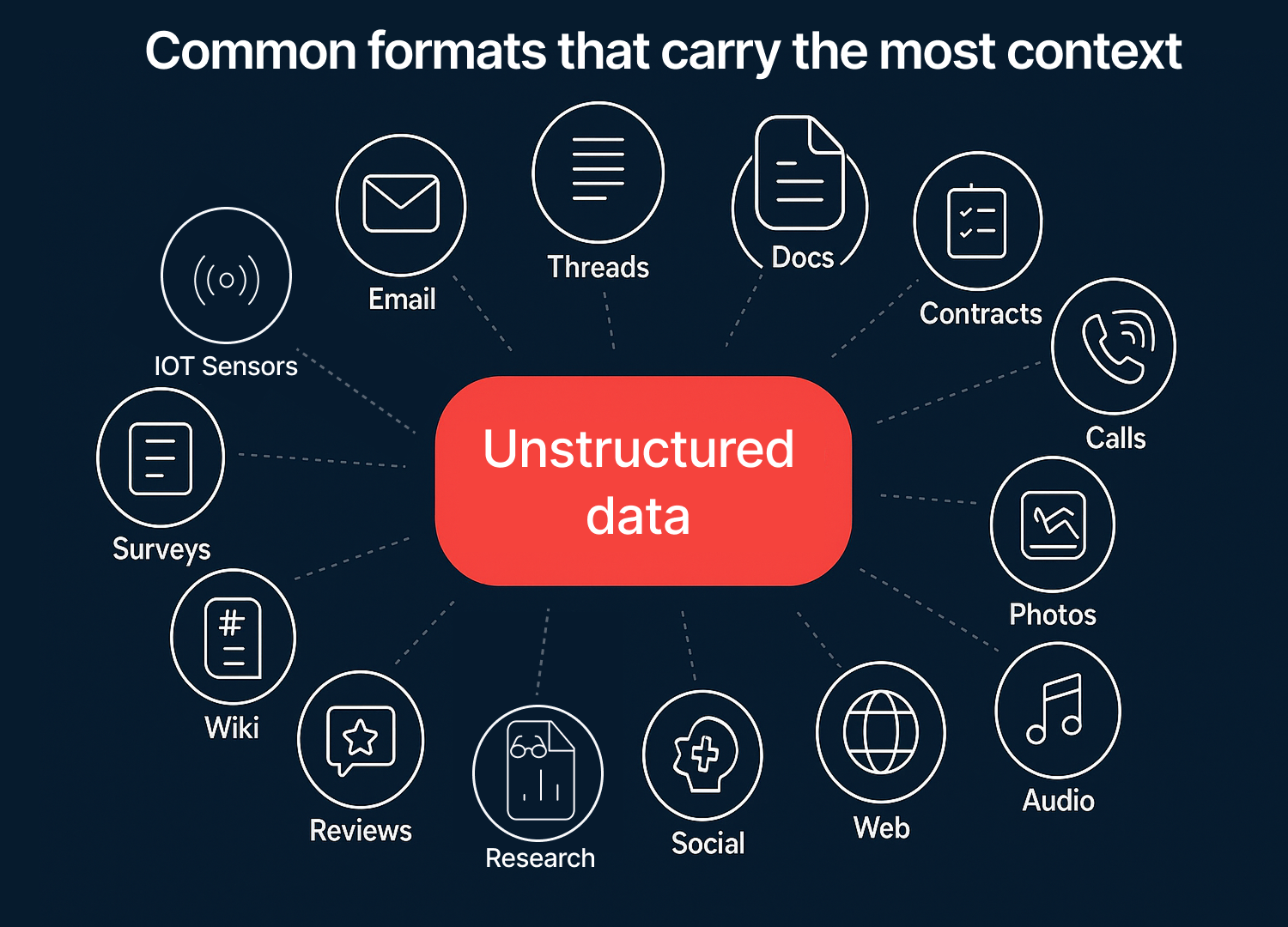

In other words, unstructured data is all the documents, communications, recordings, and digital assets your business produces. It comes in various formats, such as audio, text, images, or video.

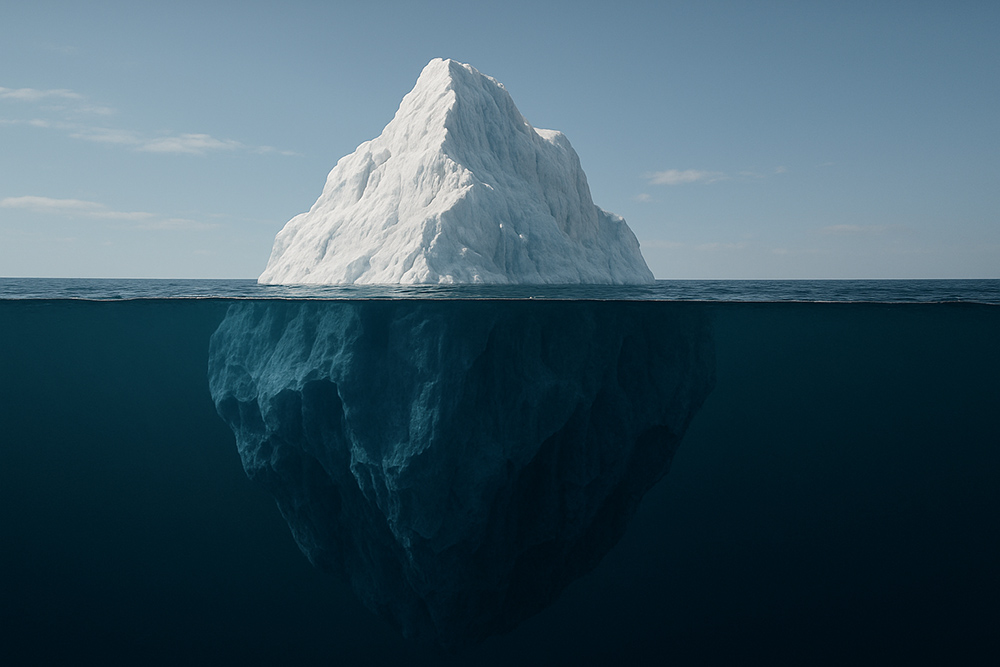

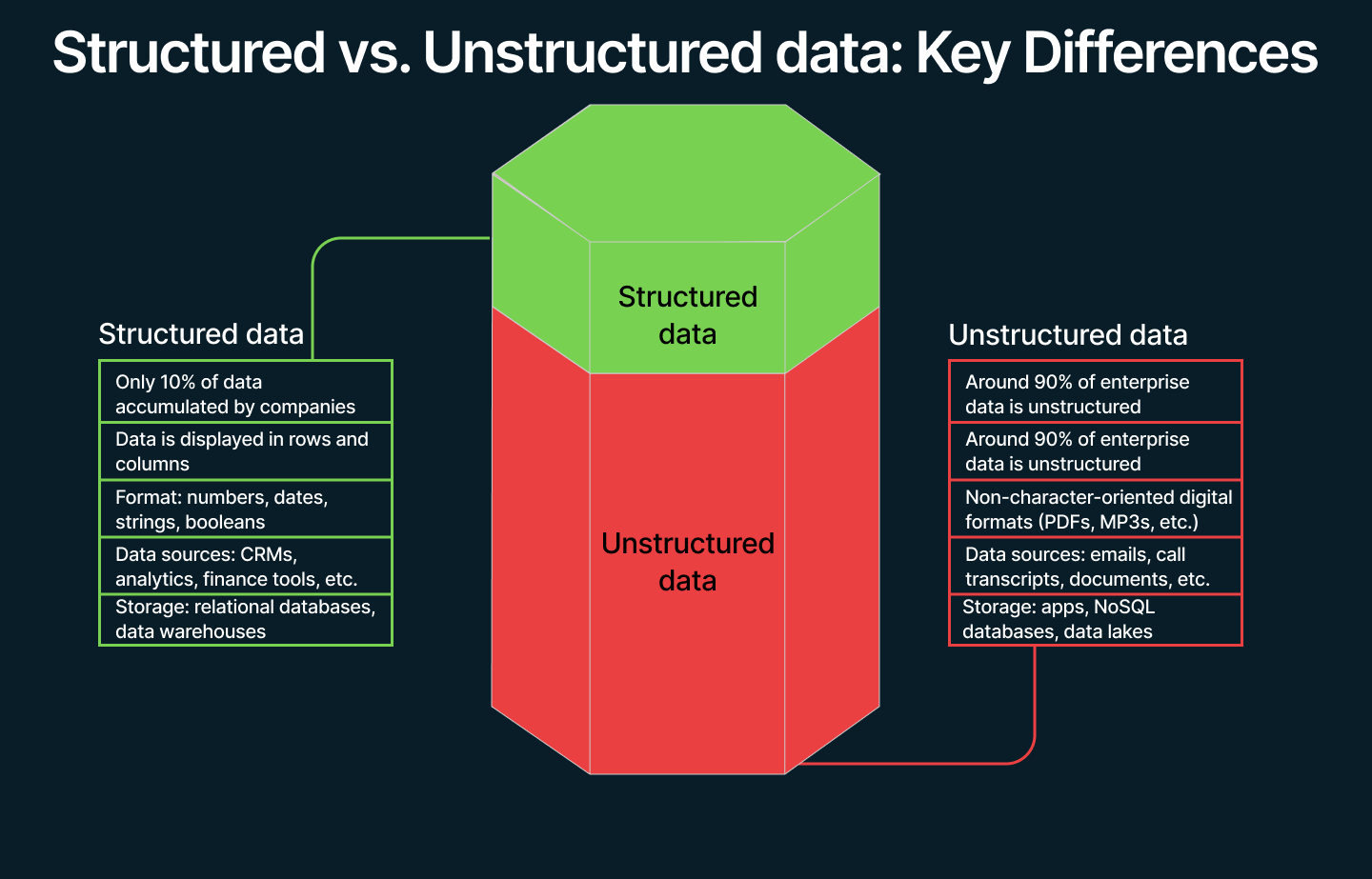

💡Research suggests that unstructured data accounts for 90% of all data generated. This means that organisations working exclusively with structured data only capture about 10% of the available business context.

Unlike structured data (rows and columns with a fixed schema), unstructured data has no predefined model and often carries richer context, such as intent, nuance, and reasoning. That context is exactly what modern AI systems need, and what dashboards historically ignored.

Why it matters

Why it matters

The volume of unstructured data is both immense and highly valuable

IDC forecasts that unstructured data will grow at around 30% CAGR over the next five years.

At this pace, unstructured data will soon surpass structured data in scale, leaving organisations that rely solely on structured data at a disadvantage. Ignoring unstructured data effectively sidelines most of an organisation’s institutional knowledge.

The infrastructure has matured

Cloud object storage has evolved to support unstructured workloads. Object storage dominates AI workloads generating 51.2% of the total cloud storage market, and it’s predicted to increase at 25% CAGR by 2030.

Over the past several years, object-based storage has advanced significantly, adding default encryption, bulk management features, and ultra-low-cost archival tiers

These new capabilities lower the barrier to centralising and processing content at petabyte scale.

AI can read unstructured data

Today’s LLMs can easily interpret text, audio, and images. The processing bottleneck has shifted from model capability to making unstructured data findable, trustworthy, and safe to use.

Before the widespread availability of LLMs, the processing of unstructured data was much more tedious. Even with technologies like OCR (Optical Character Recognition) working with large sets of documents was slow, inefficient, and difficult to scale.

“OCR is a good example. The technology was difficult to scale and never matured enough to be applied broadly across organisational processes. When an OCR system encounters a missing character, an entire word or sentence can become unreadable.

With LLMs and image recognition, the approach is fundamentally different: the system interprets the image, understands the context, and infers missing elements.”

— says our invited expert.

Each new model release is setting new benchmarks in document understanding. Claude 3.5 Sonnet reports 95.2% ANLS* on document Q&A and 90.8% on Chart Q&A (ANLS* – A Universal Document Processing Metric for Generative Large Language Models).

Costs have fallen to the point where processing everything is now feasible

GPT-5 prices are $1.25 per 1M input tokens. For GPT-5 mini and nano the prices are as low as $0.25 and $0.05 per 1M input token respectively. Considering that even the mini model was more than enough for processing the majority of documents and screenshots, the cost makes bulk parsing, classifying, and summarizing large amounts of data economically feasible.

What’s the ROI of Unstructured Data?

Before LLMs there was no incentive for companies to invest in unstructured data as there were no effective means of organizing and processing it.

What has changed is not only model capability, but how that capability aligns with core business processes. Boards are demanding visible returns quickly, so the most valuable initiatives are those that directly impact the core business processes, not background operations.

What has changed is not only model capability, but how that capability aligns with core business processes. Boards are demanding visible returns quickly, so the most valuable initiatives are those that directly impact the core business processes, not background operations.

So where does this return actually come from?

While the main focus should remain on core service offerings, applying AI to secondary functions can also deliver results. At the end of the day, profit always comes down to revenue and cost.

While the main focus should remain on core service offerings, applying AI to secondary functions can also deliver results. At the end of the day, profit always comes down to revenue and cost.

If you invest in specific AI use cases and apply unstructured data to reduce the cost of mundane, time-consuming processes, you can generate an edge because you generate cash flow that can be reinvested into other processes.

However, value from “nice-to-have” utilities, such as AI helpdesk, meeting summaries, and others is often limited. They are great to buy as SaaS features, but not worth building from the ground up. Automation of secondary processes rarely justifies building custom data ingestion and enrichment pipelines, as they do not provide true differentiation and your competitors will likely adopt similar solutions.

Structured vs. Unstructured Data

Historically, companies invested heavily in structured data processing and infrastructure, such as ETLs, data warehouses, transformation tools, and connectors to various data

“Investments in structured data had clear use cases. For example, CRM systems were seen as a direct way to increase revenue: ‘We’re investing here because it improves our sales process and drives revenue.’ The path to value was usually obvious, which both excited companies and justified the investment.”

— says Marko.

By contrast, processing unstructured data was far more challenging and lacked clear returns.

Today, the investment approach for unstructured data differs from how companies invested in structured data.

Instead of building large, company-wide infrastructure upfront, organisations are prioritising specific use cases that demonstrate immediate business value and directly impact the profit and loss statement.

The current macroeconomic landscape also plays a role. Many companies simply lack the cashflow to support initiatives that do not deliver immediate returns. It is therefore unlikely that companies will build unstructured data pipelines before identifying clear use cases.

However, the way companies treat and invest in these two types of data is not the only difference. Let’s examine some of the other, more in-depth distinctions between them.

Format differences

Structured data exists within a predefined schema, typically rows and columns with typed fields (e.g. customer_id: int, signup_date: date). It’s designed for querying, whether as CSVs loaded into relational tables or columnar formats with fixed schemas.

Unstructured data has no fixed shape when captured: emails, chat threads, PDFs, images, call recordings, receipts, etc. It is rich in context but highly variable, differing in length, modality, naming conventions, and often containing embedded tables or images within documents.

Usage differences

Structured data answers questions such as ‘How many?’, ‘How often?’, ‘What is the status?’. It powers reporting tools, KPIs, alerting systems, and real-time analytics. This follows a schema-on-write workflow: design the model first, then load the data and query it with SQL

Unstructured data answers questions such as ‘Why?’, ‘How?’, ‘What changed?’, ‘What was decided?’ It supports assistants and agents that retrieve, summarize, classify, and reason across documents, messages, and media. This follows a schema-on-read workflow: ingest first, then enrich and index the data, before using retrieval-augmented generation (RAG) or task-specific classifiers to produce clean outputs.

Storage differences

Structured data is optimised for databases and warehouses: relational stores for OLTP; columnar warehouses for analytics. These systems provide indexes, constraints, and well-defined governance at the table and column level.

Unstructured data is best stored in object storage (S3/GCS/ADLS) and lakehouse tables. Objects hold the raw files (PDF, PNG JSON, etc.) while catalogues and open table formats add versioning, schema evolution, and semantics when materialising structured views. Vector indexes and search services make data discoverable.

Unstructured Data Sources: Where Does it Originate?

Sources of unstructured data vary across companies and depend heavily on the workflows where each organisation seeks to apply AI.

However, before we dive into the specifics, it is worth addressing unstructured data sources common to every organisation:

- Emails: plain text exports, attachments, and email threads.

- Documents: reports, contracts, articles, research papers, and other context-rich text files.

- Customer interactions: service call transcripts, surveys, and feedback forms.

- Real-time data: outputs from IoT devices, sensors, smart gadgets, and industrial equipment.

- Multimedia: photos, illustrations, videos, podcast recordings, voice messages, and even music.

- Social media: posts, comments, and shared images.

- Website: blog posts, landing page content, customer reviews, and images.

This list is not exhaustive, and the sources you prioritise will depend on your objectives with AI and unstructured data.

Many discussions of unstructured data sources overlook one of the most crucial: internal knowledge.

“When an employee has been performing a task for years, they accumulate knowledge about the most efficient way to accomplish it. If that knowledge is undocumented, organisations may need to capture it through interviews, asking questions such as ‘Why do you approach it in this specific way?

Often, this context remains in employees’ heads, as previously there was little practical reason to capture it.”

— explains our domain specialist.

The availability of this information often depends on the scale of the organisation. Large organisations most likely have manuals and playbooks outlining the fundamentals of key processes in the company.

For SMEs, the situation is often different, as they rely more on team knowledge and historically had little reason to document processes. Expertise is passed on during onboarding, where experienced team members train newcomers.

Capturing the context of onboarding meetings and knowledge-sharing sessions is crucial for enabling AI workflows.

Unstructured Data Quality

Data quality has long been a challenge for those working with structured data. Inconsistent naming conventions, duplicate records, and incorrect field mapping often resulted in flawed outputs from analytics and BI systems.

Data transformation and cleaning are essential parts of any structured data pipeline because these data feed into model training sets, executive dashboards, and analytics tools that shape company-wide decision-making.

But what about unstructured data? Does quality remain an issue when working with it?

“With unstructured data the quality is all about the clarity of the audio file or image. The reliability of unstructured data depends on the equipment that captures that data, for example microphones used during a call or the camera that captures the receipt.

On the transformation side, the process has become much easier. Now, LLMs can understand the context and fill the gaps. So, if the image is blurry and some characters aren’t visible, LLMs can infer missing characters based on the surrounding context.”

— says our advisor.

Therefore, when capturing unstructured data, it is important to invest in reliable equipment to ensure the highest possible data quality.

Using metadata to monitor unstructured data quality

Investing in recording equipment is only part of the solution. To effectively manage unstructured assets, teams can apply the same data quality dimensions used for structured data, and use metadata to monitor them.

The key dimensions of data quality include:

- Accuracy: The asset faithfully reflects the source with minimal errors.

- Relevance: The asset directly serves the intended task or user question.

- Completeness & Comprehensiveness: All required elements are present and the content covers the topic sufficiently to act on it.

- Timeliness: The information is fresh enough for today’s decisions and clearly versioned.

- Consistency: The same concepts are represented the same way across documents, systems, and time.

- Uniqueness: The asset isn’t a duplicate or near-duplicate and adds a new signal.

- Interpretability: Humans and machines can understand the language, structure, and intent without guesswork.

- Validity: The file and its metadata conform to expected formats, policies, and constraints (including security/PII rules).

So how can these quality dimensions be monitored effectively?

1. Ingestion and Provenance Capture

When a file lands is ingested into storage, an asset_id needs to be assigned along with basic information:

- Provenance: file source, path/URL, ingested_at (date)

- Validity: File type, encoding

- Timeliness:source_last_modified (the last timestamps from the original system of record indicating when the asset was last modified), version

2. Make files machine-readable

Run your files through LLMs:

- GPT-4 / 5 Vision for scans and images, Whisper for audio

- Extract text, language, headings, page count / audio duration

- Chunk long items by sections or size to simplify future retrieval

Now, your asset can be researched, summarized, and compared, both by humans and models.

3. Capture the eight quality signals

The goal is to evaluate each asset against key quality criteria and capture the results as metadata.

- Accuracy: “Can this asset be trusted?”

Ask the model to fact-check a document against your own trusted documents (policies, contracts, playbooks).

Metadata records: accuracy.findings - list of flagged assets; accuracy.confidence: high / med / low.

Only assets assessed as high accuracy should be used.

- Relevancy: “Does the asset relate to the target task?”

Label whether an asset is about the target task / use case; add a short reason.

Metadata records: topic = support_policy and relevant = true.

Future workflows should draw only on assets marked relevant for that topic

- Completeness: “Are any required elements missing?”

Compare the asset against a reference document to verify that all required sections or fields are present. Flag the missing sections.

Metadata records: has_required_sections = true / false; pages = 12; attachments = 2.

- Timeliness: “How fresh is the asset?”

Keep track of the date the asset was added and how long it hasn’t been touched. Metadata records: source_last_modified=2025-08-21, staleness days=3 (increases by one with each day the asset is inactive), version = 1, expires on = 2025-12-01

- Consistency: “Are formats and units standardized?”

Make everything look and read the same way across documents so there’s no confusion over different spellings, date styles, units, or names. Feed all consistency parameters to your model and perform consistency checks. Record conflicts as JSON and then either resolve them manually or ask you LLM to normalize data.

Metadata records: date_format = “ISO-8601”, unit_system = “metric”, etc.

- Uniqueness: “Is this a duplicate?”

Keep track of duplicate content, either with AI or manual checks.

Metadata records: duplicate_group_id. Assign all duplicate content to the same groups. Place Null if content is unique.

- Interpretability — “Is it easy to read/listen/view?”

Make each asset clear for humans and AI: add structure (title, headings, page numbers), create transcripts with timestamps and speaker labels for audio/video, add short captions/alt text for images, and split long content into sensible chunks. If a scan is skewed or audio is noisy, fix it, or flag it so your workflows can down-rank or route it for cleanup.

Metadata records: language = "en", readability = good / ok / poor, layout_ok = true/false

- Validity: “Does it conform to format, policy, and legal constraints?”

Make sure each asset opens, is safe, and is allowed to be used the way you intend. That means: the file parses without errors, has a recognized format/encoding, comes from a trusted source, and complies with your policies (PII handling, confidentiality, retention, region/legal restrictions, licenses/consents). For signed docs (e.g., PDFs), verify the digital signature; for anything with sensitive data, record whether redaction is required before use.

Metadata records: validity.parse_ok = true/false, validity.mime = "application/pdf", validity.encoding = "utf-8", and more.

5. Enforce metadata usage in the workflow

Integrate metadata into the points that matter. For example, here are the conditions that must be met before using an unstructured data asset:

- Retrieval gates: serve only chunks where PII=false, staleness_days < 180, accuracy.confidence = high, relevancy.score ≥ 0.8

- Governance: retention and access from classification, time-to-live_days, policy.region

- Routing: Route policy docs to the support bot; pricing decks to sales assist; everything else to search only.

For unstructured data workloads, see 17 data engineering companies experienced with large-scale ingestion.

How LLMs Have Changed the Unstructured Data Processing Landscape

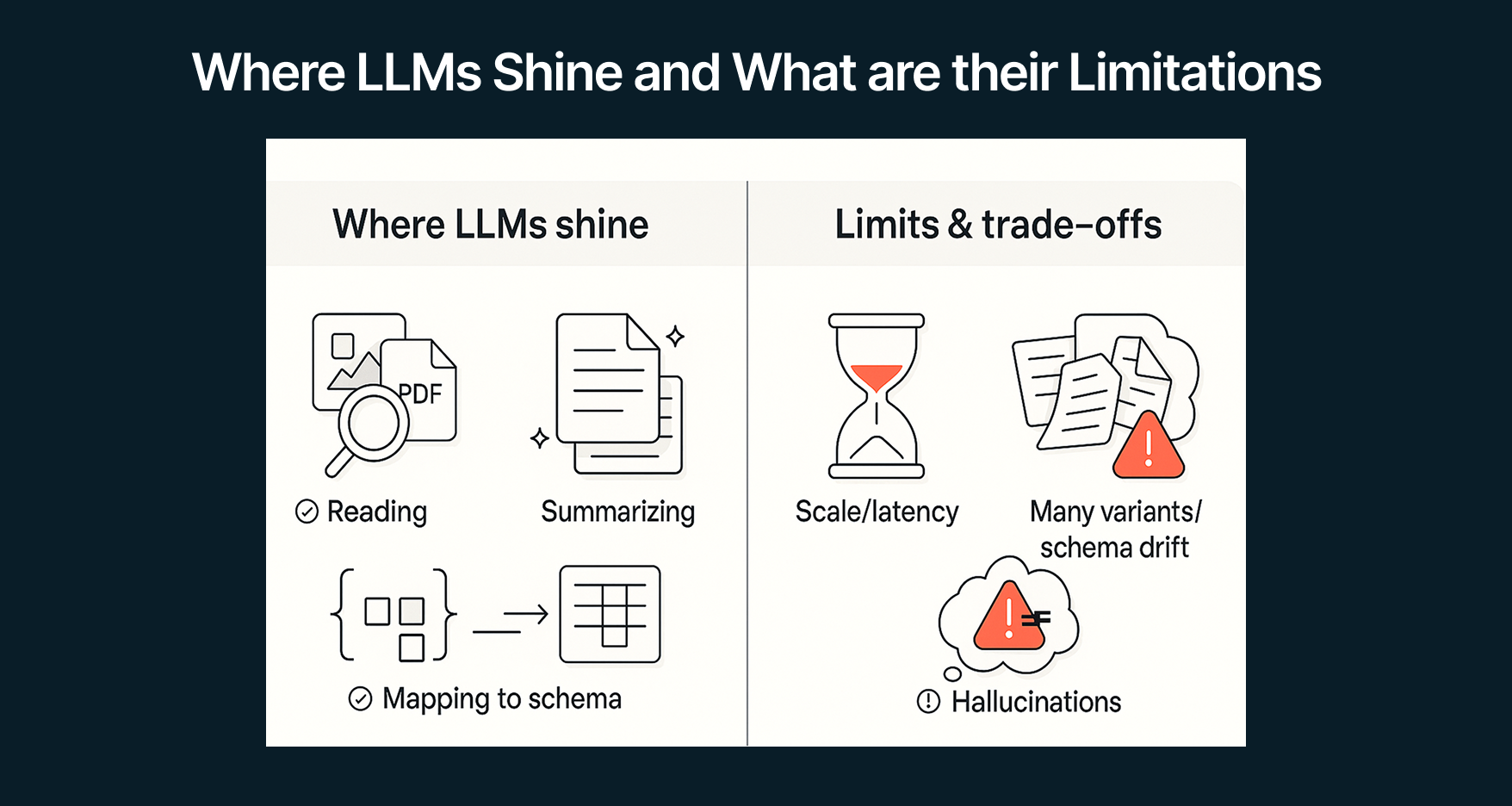

We have established that LLMs broke the unstructured data stalemate. But how exactly have AI and LLMs changed the landscape and are they the silver bullet for unstructured data processing?

The answer is twofold. On one hand, yes, because now, instead of hand-crafting rules for every template, LLMs can read messy PDFs, emails, images, and transcribe calls, then bridge that content into your existing data stack. They act as a universal converter, transforming unstructured inputs into structured outputs that data warehouses and BI tools can actually use.

On the other hand, they’re not magic. There are some practical limits that still matter:

- Scale and latency. Unstructured data assets may amount to terabytes. LLMs are slow and costly relative to that volume, and their context windows are still tiny compared with the data that needs to be processed in one go. So, there’s still a a strong need for pipelines that batch, cache, and selectively retrieve your data assets.

- Unlimited variants and business logic. For LLMs the hard part isn’t “read a document”, it’s “map thousands of similar versions of content to one stable schema”. For example, if the organization gets sales contracts from hundreds of vendors, none of them might be alike. The LLM should consistently map them to the same JSON schema, which is a very complicated task.

- Hallucinations. Hallucinations persist and can’t be reliably detected at scale. The pragmatic safeguard is dual-model checks plus human review, with the trade-off of higher operating costs.

In practice, this means:

- Teams need to use the right tools for their use case. For “easy” docs where classic OCR/NLP/IDP already hits your SLA, stick with it for cost and latency reasons; bring LLMs in when language is complex, page counts are high, or when some content is unrecognizable and you need LLMs to enrich it.

- Keep your schema (and metadata) in charge. Even with LLMs, ETL is still the bridge: define a clear schema, normalize terms/units, record provenance, and write quality metadata so downstream systems can trust the outputs.

For companies that are just starting out with AI and unstructured data processing, the philosophy is simple.The use case must come first.

Identify the Right Use Case that Drives AI ROI with Vodworks’ AI Readiness Package

Now that you’re familiar with a framework that turns AI from a cost centre into a compounding asset, the obvious next question is how to convert that playbook into a month-by-month execution plan without stalling day-to-day operations.

That is exactly what the Vodworks AI Readiness Package delivers. We begin with a Use-Case Exploration sprint in which we pressure-test candidate ideas against business value, data availability, and expected payback period.

By the end of the workshop, you will have preliminary insights and a shortlist of AI opportunities already sized for ROI potential.

Next, our architects dive deep into data quality, infrastructure robustness, and organisational readiness. The deliverables, a data readiness report, an end-to-end infrastructure map, and an organisational assessment, give you a crystal-clear view of where friction hides and what it will cost to remove.

At the end, we translate findings into a step-by-step improvement plan that ladders every fix to the ROI timeline of the chosen use cases. You receive a board-ready PDF presentation, an actionable roadmap, and tool-chain recommendations aligned to your budget cycle.

Prefer hands-on delivery? The same specialists can stay to clean data, modernise pipelines, and operationalise models, so your first production use case ships on time, within guard-rails, and already reports ROI to the dashboard.

Book a 30-minute discovery call with a Vodworks AI solution architect to review your data estate and understand your expectations from AI.

Talent Shortage Holding You Back? Scale Fast With Us

Frequently Asked Questions

How do you handle different time zones?

With a team of 150+ expert developers situated across 5 Global Development Centers and 10+ countries, we seamlessly navigate diverse timezones. This gives us the flexibility to support clients efficiently, aligning with their unique schedules and preferred work styles. No matter the timezone, we ensure that our services meet the specific needs and expectations of the project, fostering a collaborative and responsive partnership.

What levels of support do you offer?

We provide comprehensive technical assistance for applications, providing Level 2 and Level 3 support. Within our services, we continuously oversee your applications 24/7, establishing alerts and triggers at vulnerable points to promptly resolve emerging issues. Our team of experts assumes responsibility for alarm management, overseas fundamental technical tasks such as server management, and takes an active role in application development to address security fixes within specified SLAs to ensure support for your operations. In addition, we provide flexible warranty periods on the completion of your project, ensuring ongoing support and satisfaction with our delivered solutions.

Who owns the IP of my application code/will I own the source code?

As our client, you retain full ownership of the source code, ensuring that you have the autonomy and control over your intellectual property throughout and beyond the development process.

How do you manage and accommodate change requests in software development?

We seamlessly handle and accommodate change requests in our software development process through our adoption of the Agile methodology. We use flexible approaches that best align with each unique project and the client's working style. With a commitment to adaptability, our dedicated team is structured to be highly flexible, ensuring that change requests are efficiently managed, integrated, and implemented without compromising the quality of deliverables.

What is the estimated timeline for creating a Minimum Viable Product (MVP)?

The timeline for creating a Minimum Viable Product (MVP) can vary significantly depending on the complexity of the product and the specific requirements of the project. In total, the timeline for creating an MVP can range from around 3 to 9 months, including such stages as Planning, Market Research, Design, Development, Testing, Feedback and Launch.

Do you provide Proof of Concepts (PoCs) during software development?

Yes, we offer Proof of Concepts (PoCs) as part of our software development services. With a proven track record of assisting over 70 companies, our team has successfully built PoCs that have secured initial funding of $10Mn+. Our team helps business owners and units validate their idea, rapidly building a solution you can show in hand. From visual to functional prototypes, we help explore new opportunities with confidence.

Are we able to vet the developers before we take them on-board?

When augmenting your team with our developers, you have the ability to meticulously vet candidates before onboarding. We ask clients to provide us with a required developer’s profile with needed skills and tech knowledge to guarantee our staff possess the expertise needed to contribute effectively to your software development projects. You have the flexibility to conduct interviews, and assess both developers’ soft skills and hard skills, ensuring a seamless alignment with your project requirements.

Is on-demand developer availability among your offerings in software development?

We provide you with on-demand engineers whether you need additional resources for ongoing projects or specific expertise, without the overhead or complication of traditional hiring processes within our staff augmentation service.

Do you collaborate with startups for software development projects?

Yes, our expert team collaborates closely with startups, helping them navigate the technical landscape, build scalable and market-ready software, and bring their vision to life.

Our startup software development services & solutions:

- MVP & Rapid POC's

- Investment & Incubation

- Mobile & Web App Development

- Team Augmentation

- Project Rescue

Subscribe to our blog

Related Posts

Get in Touch with us

Thank You!

Thank you for contacting us, we will get back to you as soon as possible.

Our Next Steps

- Our team reaches out to you within one business day

- We begin with an initial conversation to understand your needs

- Our analysts and developers evaluate the scope and propose a path forward

- We initiate the project, working towards successful software delivery